What Is Speculative Execution?

With a new Apple security flaw in the news, it’s a good time to revisit the question of what speculative execution is and how it works. This topic received a great deal of discussion a few years ago when Spectre and Meltdown were frequently in the news and new side-channel attacks were popping up every few months.

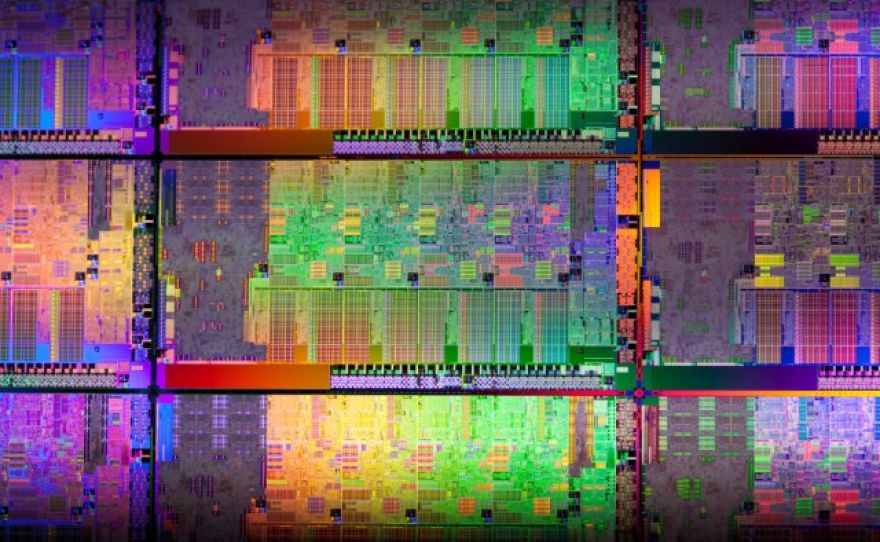

Speculative execution is a technique used to increase the performance of all modern microprocessors to one degree or another, including chips built or designed by AMD, ARM, IBM, and Intel. The modern CPU cores that don’t use speculative execution are all intended for ultra-low power environments or minimal processing tasks.

What Is Speculative Execution?

Speculative execution is one of three components of out-of-order execution, also known as dynamic execution. Along with multiple branch prediction (used to predict the instructions most likely to be needed in the near future) and dataflow analysis (used to align instructions for optimal execution, as opposed to executing them in the order they came in), speculative execution delivered a dramatic performance improvement over previous Intel processors when first introduced in the mid-1990s. Because these techniques worked so well, they were quickly adopted by AMD, which used out-of-order processing beginning with the K5.

ARM’s focus on low-power mobile processors initially kept it out of the out-of-order execution (OOoE) playing field, but the company adopted the technique when it built the Cortex A9 and has continued to expand its use with later, more powerful Cortex-branded CPUs.

Here’s how it works. Modern CPUs are all pipelined, which means they can work on multiple instructions at once, with each instruction at a different stage of completion, as shown in the diagram below.

This is a general diagram of a pipelined CPU, showing how instructions move through the processor from clock cycle to clock cycle.

Imagine that the green block represents an if-then-else branch. The branch predictor calculates which branch is more likely to be taken, fetches the next set of instructions associated with that branch, and begins speculatively executing them before it knows which of the two code branches it’ll be using. In the diagram above, these speculative instructions are represented as the purple box. If the branch predictor guessed correctly, then the next set of instructions the CPU needed are lined up and ready to go, with no pipeline stall or execution delay.
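To make the idea of "calculating which branch is more likely" concrete, here is a minimal sketch of a two-bit saturating counter, a classic textbook prediction scheme. It is an illustration only: the function name, the starting state, and the loop workload are assumptions made for this example, and real predictors in shipping CPUs are far more elaborate.

```python
def simulate_two_bit_predictor(outcomes, initial_state=2):
    """Return the fraction of branches a two-bit counter predicts correctly.

    outcomes: iterable of booleans, True meaning the branch was taken.
    Counter states 0 and 1 predict "not taken"; states 2 and 3 predict "taken".
    """
    state = initial_state
    correct = 0
    total = 0
    for taken in outcomes:
        predicted_taken = state >= 2
        if predicted_taken == taken:
            correct += 1
        # Nudge the counter toward the real outcome, saturating at 0 and 3.
        state = min(state + 1, 3) if taken else max(state - 1, 0)
        total += 1
    return correct / total if total else 0.0


# Hypothetical workload: a loop branch that is taken 99 times and then
# falls through once when the loop exits, with the whole loop run 10 times.
loop_branch = ([True] * 99 + [False]) * 10
accuracy = simulate_two_bit_predictor(loop_branch)
print(f"Loop branch accuracy: {accuracy:.2%}")
```

In this toy workload the predictor only misses on each loop exit, which is why predictable branches such as loops are cheap to speculate on. A branch that alternates between taken and not taken would fare much worse under this simple scheme.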

Without branch prediction and speculative execution, the CPU doesn’t know which branch it will take until the first instruction in the pipeline (the green box) finishes executing and moves to Stage 4. Instead of moving straight from one set of instructions to the next, the CPU has to wait for the appropriate instructions to arrive. This hurts system performance, since that waiting is time the CPU could have spent performing useful work.

The reason it’s “speculative” execution is that the CPU might be wrong. If it is, the CPU discards the speculative work, fetches the correct instructions, and executes those instead. But branch predictors aren’t wrong very often; accuracy rates are typically above 95 percent.
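A rough back-of-the-envelope model shows why that accuracy figure matters. The sketch below assumes every wrong guess, and every branch on a CPU with no prediction at all, costs the same fixed number of stall cycles. The 15-cycle penalty is a made-up number for illustration, not a measurement of any real processor.

```python
def average_branch_stall(accuracy, penalty_cycles):
    """Expected stall cycles per branch for a given prediction accuracy."""
    return (1.0 - accuracy) * penalty_cycles


PENALTY_CYCLES = 15  # assumed cost of waiting for, or recovering from, a branch

# With no prediction, the CPU waits on every branch (modeled as 0% accuracy).
no_prediction = average_branch_stall(0.0, PENALTY_CYCLES)

# With the 95 percent accuracy mentioned above, only 1 branch in 20 pays.
with_prediction = average_branch_stall(0.95, PENALTY_CYCLES)

print(f"No prediction:   {no_prediction:.2f} stall cycles per branch")
print(f"95% prediction:  {with_prediction:.2f} stall cycles per branch")
```

Under these assumptions, speculation cuts the average branch cost from 15 cycles to well under one cycle, which is the intuition behind accepting the occasional wasted work.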

Why Use Speculative Execution?

Decades ago, before out-of-order execution was invented, CPUs were what we today call “in order” designs. Instructions executed in the order they were received, with no attempt to reorder them or execute them more efficiently. One of the major problems with in-order execution is that a pipeline stall stops the entire CPU until the issue is resolved.

The other problem that drove the development of speculative execution was the gap between CPU and main memory speeds. The graph below shows the gap between CPU and memory clocks. As the gap grew, the amount of time the CPU spent waiting on main memory to deliver information grew as well. Features like L1, L2, and L3 caches and speculative execution were designed to keep the CPU busy and minimize the time it spent idling.

If memory could match the performance of the CPU there would be no need for caches.
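The effect of caches on waiting time can be sketched with the standard average memory access time calculation. The latencies and hit rates below are invented for illustration and do not describe any particular CPU; the function name is likewise an assumption for this example.

```python
def average_access_time(levels, memory_latency):
    """Average cycles per memory access for a cache hierarchy.

    levels: list of (lookup_latency_cycles, hit_rate) pairs, ordered from
            the cache closest to the core outward.
    memory_latency: cycles to reach main memory after missing every cache.
    """
    expected = 0.0
    reach_probability = 1.0  # chance a request gets as far as this level
    for lookup_latency, hit_rate in levels:
        expected += reach_probability * lookup_latency
        reach_probability *= (1.0 - hit_rate)
    return expected + reach_probability * memory_latency


# Hypothetical numbers: a 4-cycle L1 that hits 90% of the time, a 12-cycle
# L2 that catches 80% of what L1 misses, and 200-cycle main memory.
with_caches = average_access_time([(4, 0.9), (12, 0.8)], 200)
without_caches = average_access_time([], 200)

print(f"With caches:    {with_caches:.1f} cycles per access")
print(f"Without caches: {without_caches:.1f} cycles per access")
```

With these made-up figures, the average access drops from 200 cycles to about 9, even though main memory itself is no faster.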

It worked. The combination of large off-die caches and out-of-order execution gave Intel’s Pentium Pro and Pentium II opportunities to stretch their legs in ways previous chips couldn’t match. This graph from a 1997 Anandtech review shows the advantage clearly.

Thanks to the combination of speculative execution and large caches, the Pentium II 166 decisively outperforms a Pentium 250 MMX, despite the fact that the latter has a 1.51x clock speed advantage over the former.

Ultimately, it was the Pentium II that delivered the benefits of out-of-order execution to most consumers. The Pentium II was a fast microprocessor relative to the Pentium systems that had been top-end just a short while before. AMD was an absolutely capable second-tier option, but until the original Athlon launched, Intel had a lock on the absolute performance crown.

The Pentium Pro and the later Pentium II were far faster than the earlier architectures Intel used. This wasn’t guaranteed. When Intel designed the Pentium Pro, it spent a significant amount of its die and power budget enabling out-of-order execution. But the bet paid off, big time.

Intel has been vulnerable to more of the side-channel attacks that came to light over the past three years than AMD or ARM because it opted to speculate more aggressively and wound up exposing certain types of data in the process. Several rounds of patches have reduced those vulnerabilities in previous chips, and newer CPUs are designed with security fixes for some of these problems in hardware. It must also be noted that the risk of these kinds of side-channel attacks remains theoretical. In the years since they surfaced, no attack using these methods has been reported.

There are differences between how Intel, AMD, and ARM implement speculative execution, and those differences are part of why Intel is exposed to some of these attacks in ways that the other vendors aren’t. But speculative execution, as a technique, is simply far too valuable to stop using. Every single high-end CPU architecture today uses out-of-order execution. And speculative execution, while implemented differently from company to company, is used by each of them. Without speculative execution, out-of-order execution wouldn’t function.

The State of Side-Channel Vulnerabilities in 2021

From 2018 to 2020, we saw a number of side-channel vulnerabilities discussed, including Spectre, Meltdown, Foreshadow, RIDL, MDS, ZombieLoad, and others. It became a bit trendy for security researchers to issue a serious report, a market-friendly name, and occasional hair-raising PR blasts that raised the specter (no pun intended) of devastating security issues that, to date, have not emerged.

Side-channel research continues (a new vulnerability was found in Intel CPUs in March), but part of the reason side-channel attacks work is that physics allows us to snoop on information using channels not intended to convey it. (Side-channel attacks are attacks that exploit weaknesses in an implementation to leak data, rather than targeting a specific algorithm to crack it.)
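A toy example helps show what "leaking through the implementation" means. The sketch below is not a speculative-execution attack. It is a much simpler, hypothetical case in which a comparison routine stops at the first mismatched character, so the amount of work it does reveals how much of a guess was right. A step counter stands in for elapsed time, and every name here is invented for illustration.

```python
def leaky_compare(secret, guess):
    """Compare two strings, stopping at the first mismatch.

    Returns (equal, steps). `steps` stands in for how long the check took.
    """
    steps = 0
    for s, g in zip(secret, guess):
        steps += 1
        if s != g:
            return False, steps
    return len(secret) == len(guess), steps


def recover_secret(oracle, length, alphabet):
    """Rebuild the secret one character at a time from the oracle's behavior.

    Assumes the padding character "\0" never appears in the secret.
    """
    known = ""
    for position in range(length):
        padding = "\0" * (length - position - 1)
        # The correct character lets the comparison run one step longer
        # (or, for the final character, makes the comparison succeed).
        best = max(alphabet, key=lambda c: oracle(known + c + padding))
        known += best
    return known


secret = "cab"
recovered = recover_secret(lambda g: leaky_compare(secret, g), 3, "abc")
print(recovered)
```

The attacker never reads the secret directly. It is inferred entirely from a signal the routine was never meant to expose, which is the same general principle the speculative-execution attacks rely on, using cache timing instead of a step count.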

We learn things about outer space on a regular basis by observing it in spectrums of energy that humans cannot naturally perceive. We watch for neutrinos using detectors drowned deep in places like Lake Baikal, precisely because the characteristics of these locations help us discern the faint signal we’re looking for from the noise of the universe going about its business. A lot of what we know about geology, astronomy, seismology, and any field where direct observation of the data is either impossible or impractical conceptually relates to the idea of “leaky” side channels. Humans are very good at teasing out data by measuring indirectly. There are ongoing efforts to design chips that make side-channel exploits more difficult, but it’s going to be very difficult to lock them out entirely.

This is not meant to imply that these security problems are not serious or that CPU firms should throw up their hands and refuse to fix them because the universe is inconvenient, but it’s a giant game of whack-a-mole for now, and it may not be possible to secure a chip against all such attacks. As new security methods are invented, new snooping methods that rely on other side channels may appear as well. Some fixes, like disabling Hyper-Threading, can improve security but come with substantial performance hits in certain applications.

Luckily, for now, all of this back-and-forth is theoretical. Intel has been the company affected the most by these disclosures, but none of the side-channel disclosures that have dropped since Spectre and Meltdown have been used in a public attack. AMD, similarly, is aware of no group or organization targeting Zen 3 via its recent disclosure. Issues like ransomware have become far worse in the past two years, with no need for help from side-channel vulnerabilities.

In the long run, we expect AMD, Intel, and other vendors to continue patching these issues as they arise, with a combination of hardware, software, and firmware updates. Conceptually, side-channel attacks like these are extremely difficult, if not impossible, to prevent. Specific issues can be mitigated or worked around, but the nature of speculative execution means that a certain amount of data is going to leak under specific circumstances. It may not be possible to prevent it without giving up far more performance than most users would ever want to trade.
